Open Weight AI Models Explained: Architecture, Use and Tradeoffs

Published 2026-03-14 · AI Education | Data/Infra

Open weight AI models are suddenly everywhere in technical roadmaps and vendor decks, but the term itself is weirdly fuzzy. Are they open source? Not necessarily. Free? Sometimes. Enterprise-ready? Potentially amazing or a governance nightmare, depending on how you handle them.

At a high level, an open weight AI model is one where the *trained parameters* (the “weights”) are published so you can download, run, and often fine-tune the model on your own infrastructure. That makes them a middle ground between black-box closed models and fully open source projects where both code and weights are released under very permissive licenses.

They matter now because companies want strong AI capabilities without shipping all their data to a third-party API or getting locked into a single vendor. Open weight foundation models enable private deployment, closer control over latency and cost, and the option to tailor models to your stack, especially when paired with Nvidia GPUs or other accelerators. They also raise new questions about licensing, compliance, and long-term maintenance.

This explainer walks through what open weight models are, how they differ from open source, where they shine, and where they can quietly wreck your weekend if you don’t plan ahead.

What Are Open Weight AI Models?

An open weight model is a trained AI model whose weights are publicly released so you can download and run it yourself. Think of the weights as the model’s long-term memory: the numbers it has learned from training. When those are open, you can:

- Host the model on your own hardware
- Fine-tune it on your private data
- Integrate it deeply into your stack without going through a third-party API

This is different from a closed model, where you only get a hosted endpoint and never see the internals.

Crucially, “open weight” does not automatically mean open source. The code might be open, but the license on the weights can be restrictive: no commercial use, no derivative models, or use only on certain platforms. So you get technical openness (you can run it) without full legal freedom (you can’t necessarily use it however you like).

If you want powerful foundation models for private deployment, cost control, and tighter data governance, open weight LLMs are one of the most practical options available today.
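To make “weights are just numbers you can download and run” concrete, here is a toy sketch in Python. It is not how any real LLM is packaged: the two-parameter model, the `toy_weights.json` file name, and the parameter values are all hypothetical, and real releases ship billions of parameters in dedicated tensor formats rather than JSON. The point is only that once the parameter file is in your hands, inference runs entirely in your own process.

```python
import json
import math
import os
import tempfile


def save_weights(path, weights):
    """Write learned parameters to disk; this file stands in for a published checkpoint."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(weights, f)


def load_weights(path):
    """Read the parameter file back, as a downstream user would after downloading it."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


def predict(weights, features):
    """Run inference locally: a weighted sum passed through a sigmoid."""
    z = weights["bias"] + sum(w * x for w, x in zip(weights["w"], features))
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical "trained" parameters; a real model has billions of these.
published = {"w": [0.8, -0.4], "bias": 0.1}

path = os.path.join(tempfile.gettempdir(), "toy_weights.json")
save_weights(path, published)      # the publisher's side
local = load_weights(path)         # your side, after download
score = predict(local, [1.0, 2.0]) # no third-party API involved
```

A closed model gives you only the equivalent of `predict` behind a network endpoint; an open weight release gives you the file itself, subject to its license.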

How It Works

Under the hood, open weight models are trained much like other large language models:

1. Pretraining: A model starts with random weights. It’s trained on massive text (and sometimes code or multimodal) datasets to predict the next token. Over a long training run covering billions or even trillions of tokens, the weights come to encode patterns about language, structure, and reasoning.
2. Fine-tuning & alignment: After pretraining, the base model may be fine-tuned on curated instructions, dialogue, or domain-specific data to make it more helpful and safer for real users.
3. Checkpoint selection: The model sponsor picks specific checkpoints (snapshots of weights) that meet quality and safety bars.
4. Release as open weights: Those checkpoints are packaged with a model card, basic documentation, and a license. You download the weights and run them using common frameworks and runtimes (PyTorch, TensorRT, etc.), usually on GPUs.
5. Local inference & adaptation: Your infra team wires the model into your serving stack. You might quantize it, shard it across GPUs, or fine-tune it further for tasks like RAG, code generation, or customer support.

The magic of open weight models is that you own the runtime: where they run, how they’re optimized, and how they’re connected to your internal systems.
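Quantization, mentioned in step 5, is one of the adaptations that only becomes possible once you hold the weights. The sketch below shows symmetric per-tensor int8 quantization in plain Python, which is one simple scheme among several; the sample weight values are made up, and production toolchains typically work per channel or per group on real tensors rather than on Python lists.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale for the whole tensor; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the integers and the shared scale."""
    return [v * scale for v in q]


# Hypothetical slice of a weight tensor.
weights = [0.12, -0.98, 0.45, 0.003, -0.27]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding to the nearest integer bounds the per-weight error by half a step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now needs one byte instead of two or four, at the cost of a rounding error of at most `scale / 2` per weight. Whether that loss is acceptable is something you would have to validate on your own tasks.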

Real-World Applications

Open weight AI models are especially attractive when you care about data control, latency, or cost predictability. Common use cases:

- Enterprise search & RAG: Run a foundation model inside your VPC, wire it to your internal docs, and answer questions without sending data to an external API.
- Developer assistants: Code completion, code review, and in-IDE chat tools that run on your own GPUs and can be tuned on your codebase.
- Customer support bots: Use an open weight LLM that knows your products and policies, but keep chat logs and training data entirely on your infra.
- Domain-specific copilots: Finance, legal, healthcare, manufacturing. You can fine-tune models on proprietary data while keeping both the data and the weights private.
- On-prem or edge workloads: For regulated industries or low-connectivity environments, deploying a compact open weight model locally can beat any SaaS API.

In short, whenever your security or compliance people say, “We’re not sending *that* over the internet,” open weight models become very interesting.
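The RAG use case above boils down to two local steps: retrieve the most relevant internal document, then place it in the prompt that goes to your self-hosted model. Here is a minimal sketch of those two steps using bag-of-words cosine similarity from the standard library. The document names and contents in `DOCS` are hypothetical, a real system would most likely use embedding vectors and a vector index instead of word counts, and the final call to the model is left out because it depends on your serving stack.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question, docs, k=1):
    """Return the names of the k documents most similar to the question."""
    q_vec = Counter(tokenize(question))
    ranked = sorted(docs, key=lambda name: cosine(q_vec, Counter(tokenize(docs[name]))), reverse=True)
    return ranked[:k]


def build_prompt(question, docs, k=1):
    """Assemble the prompt that would be sent to the locally hosted model."""
    context = "\n".join(docs[name] for name in retrieve(question, docs, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Hypothetical internal documents; nothing here leaves your network.
DOCS = {
    "vpn.md": "To reset your VPN token, open the IT portal and choose reset token.",
    "expenses.md": "Expense reports are due on the fifth business day of each month.",
    "oncall.md": "The on-call engineer rotates every Monday at nine in the morning.",
}

question = "How do I reset my VPN token?"
top = retrieve(question, DOCS)
prompt = build_prompt(question, DOCS)
```

Because retrieval, prompt assembly, and inference all run inside your infrastructure, neither the question nor the retrieved document has to cross the network boundary.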

Benefits & Limitations

Open weight models come with some very real upsides and a few traps.

Benefits

- Control over data: Prompts and outputs stay inside your network, which makes security and privacy teams sleep better.
- Cost transparency: You pay for hardware and engineering, not per-token API fees that surprise your CFO.
- Customization: Fine-tune, quantize, or distill the model to fit your domain, latency, and budget.
- Portability: In many cases, you can move models between clouds or on-prem without changing vendors.

Limitations

- Operational complexity: You now run and scale inference. GPU provisioning, autoscaling, observability, and incident response are your problem.
- Upgrades are not automatic: With APIs, you quietly benefit from model improvements. With open weights, you must adopt new checkpoints and re-validate them.
- Hardware requirements: Larger models want serious GPUs; they’re less friendly to small teams without infra experience.
- License risk: “Open” can hide restrictive or ambiguous terms. If you’re in a regulated or large enterprise setting, legal review is mandatory.

Open weight models shine when you have strong infra capabilities and clear governance. They’re overkill if you just want a quick chatbot prototype.
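The hardware requirement can be roughed out before you buy anything, because the memory needed just to hold the weights is the parameter count times the bytes per parameter. The sketch below does that arithmetic. The `overhead` factor of 1.2 is an assumed allowance for runtime memory such as caches and activations, not a measured figure, and the 70-billion-parameter model and 80 GB GPU are illustrative inputs rather than a recommendation.

```python
import math

# Storage cost per parameter for common numeric formats.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params, dtype):
    """Memory needed to hold the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def min_gpus(num_params, dtype, gpu_memory_gb, overhead=1.2):
    """Rough lower bound on GPU count; `overhead` is an assumed runtime allowance."""
    needed = weight_memory_gb(num_params, dtype) * overhead
    return math.ceil(needed / gpu_memory_gb)


# Illustrative inputs: a 70B-parameter model on 80 GB accelerators.
fp16_gb = weight_memory_gb(70e9, "fp16")   # weights only, 16-bit
int4_gb = weight_memory_gb(70e9, "int4")   # weights only, 4-bit quantized
gpus_fp16 = min_gpus(70e9, "fp16", gpu_memory_gb=80)
gpus_int4 = min_gpus(70e9, "int4", gpu_memory_gb=80)
```

Under these assumptions the 16-bit weights alone take 140 GB and need at least three 80 GB GPUs, while a 4-bit version takes 35 GB and fits on one. Real deployments also depend on context length, batch size, and the serving runtime, so treat this as a first-pass sizing check only.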

Latest Research & Trends

Two big trends are shaping the open weight ecosystem: vendor investment and specialized reasoning models.

First, major hardware and platform players are putting serious money behind non-closed models. Reporting around Nvidia’s strategy describes roughly $26 billion in investments across data centers, networking, and companies working on open or more accessible models, reflecting a bet that enterprises will want to run powerful models on their own or partner infrastructure rather than rely only on proprietary endpoints. This aligns open weight models with GPU-rich stacks, where you can tune and serve models close to your data while still leaning on vendor-provided tooling for performance and orchestration. (Source: https://www.wired.com/story/nvidia-investing-26-billion-open-source-models/)

Second, model providers are pushing reasoning-focused models that can be offered in more flexible ways. OpenAI, for example, released two open weight reasoning models aimed at tackling complex, multi-step problems such as code understanding and math, with an emphasis on reliability and better tool use. Releases like this point toward model families in which reasoning variants are available with downloadable weights alongside API-only offerings, giving enterprises more choice for specialized workloads. (Source: https://techcrunch.com/2025/08/05/openai-launches-two-open-ai-reasoning-models/)

Taken together, these trends suggest that open weight models will increasingly coexist with hosted reasoning services, with hardware vendors and model labs both incentivized to support more flexible deployment options.

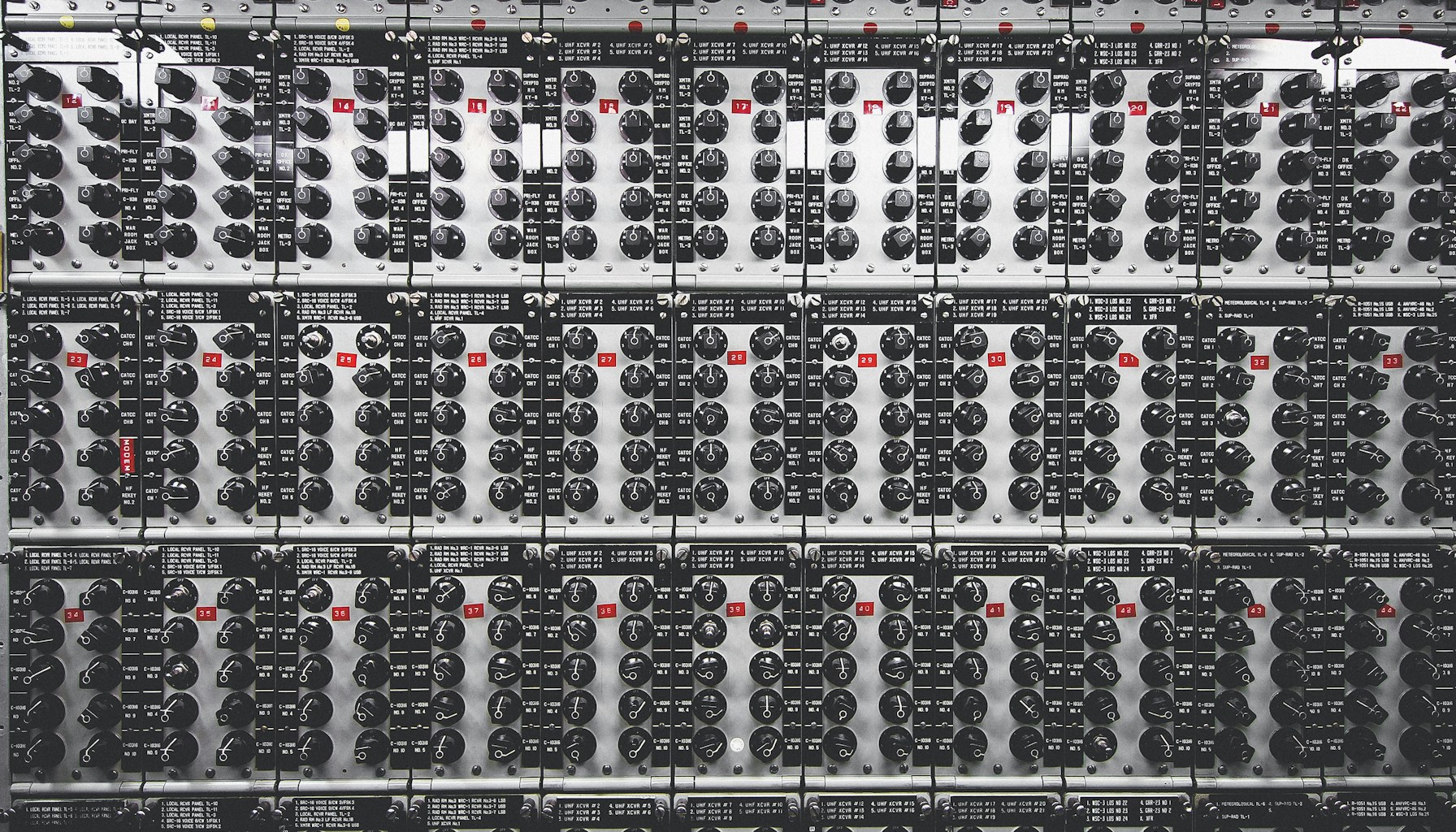

Glossary

- Weights: The numerical parameters a model learns during training; they encode the model’s knowledge.

- Open Weight Model: A model where the trained weights are published so others can download and run it, usually under a specific license.

- Foundation Model: A large, general-purpose model pretrained on broad data and adaptable to many downstream tasks.

- Inference: Running a trained model to generate outputs (like text) from inputs (like prompts).

- Fine-tuning: Additional training on a smaller, targeted dataset to specialize a pretrained model for a specific domain or task.

- Reasoning Model: A model variant focused on multi-step problem solving and complex tasks, such as math or code understanding.

- GPU (Graphics Processing Unit): A parallel processor widely used to accelerate AI training and inference workloads.

- Model License: The legal terms specifying how you can use, modify, or redistribute model weights and related assets.

Citations

- https://www.wired.com/story/nvidia-investing-26-billion-open-source-models/

- https://techcrunch.com/2025/08/05/openai-launches-two-open-ai-reasoning-models/
